Service Unavailable, We're Sorry

TL;DR:

The 503 response from the IIS machine, Service Unavailable, is the result of repeated application crashes. Since the w3wp.exe worker process, created by IIS to execute a web application, is crashing frequently, the respective IIS application pool is turned off. This is a feature of IIS, at Application Pool level, called Rapid-Fail Protection. It avoids consuming valuable system resources on creating a worker process that crashes anyway, shortly after spawning.

- Evidence of repeated w3wp.exe crashes and Rapid-Fail Protection may be found in Windows Events, in the System log with Source=WAS.

- Evidence of what causes the w3wp.exe to crash may be found in Windows Events, in the Application log: second-chance crashing exceptions with w3wp.exe.

Collecting the basic IIS troubleshooting info helps expedite the investigation into the root cause of application crashes. Follow the steps at http://linqto.me/iis-bones-files for instructions.

In many cases, it may be necessary to collect memory dumps to study the exceptions causing the crash: see the article at http://linqto.me/dumps.

If we look at the reference list of responses that IIS could send, an HTTP response status 503 means Service Unavailable. In most cases, we have a 503.0, Application pool unavailable; and when we check the respective application pool, it shows "Stopped".

Believe it or not, that is actually an IIS feature in action: Rapid-Fail Protection. If the hosted application crashes its executing process 5 times in less than 5 minutes, then the Application Pool is turned off automatically. These values are the default values; but you get the point.
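To make the "5 crashes in less than 5 minutes" rule concrete, here is a minimal Python sketch of such a counter. It is only a simplified model: the class name, the sliding-window bookkeeping and the state strings are assumptions for illustration, not how WAS is actually implemented.

```python
from collections import deque
from datetime import datetime, timedelta


class RapidFailProtection:
    """Simplified model: stop the pool after max_crashes within interval."""

    def __init__(self, max_crashes=5, interval=timedelta(minutes=5)):
        self.max_crashes = max_crashes
        self.interval = interval
        self.crash_times = deque()
        self.pool_state = "Started"

    def record_crash(self, when):
        """Register one worker process crash; return the resulting pool state."""
        if self.pool_state == "Stopped":
            return self.pool_state
        self.crash_times.append(when)
        # Forget crashes that fell out of the observation window.
        while when - self.crash_times[0] >= self.interval:
            self.crash_times.popleft()
        if len(self.crash_times) >= self.max_crashes:
            self.pool_state = "Stopped"
        return self.pool_state


if __name__ == "__main__":
    rfp = RapidFailProtection()
    start = datetime(2020, 1, 1, 12, 0, 0)  # arbitrary example timestamp
    for i in range(5):
        state = rfp.record_crash(start + timedelta(seconds=30 * i))
        print(f"crash {i + 1}: pool is {state}")
```

In this model, an app that crashes every two minutes never trips the protection, because no 5-minute window ever contains five crashes.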

But why turn off the application pool at all? Why is IIS doing that?

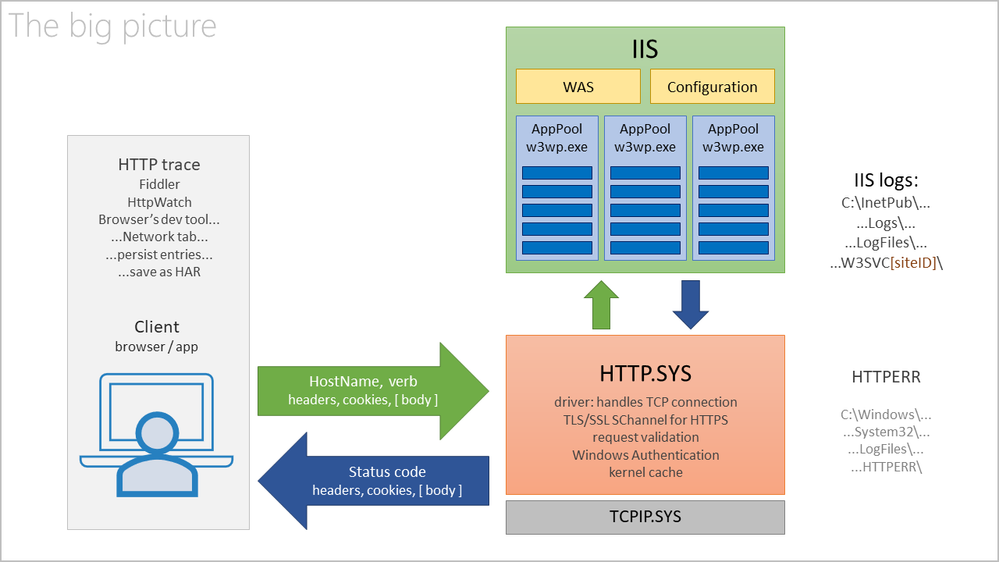

You see, there is an IIS component called WAS, Windows (Process) Activation Service, that creates and then monitors the worker processes – w3wp.exe – for application pools. These are the IIS processes that load and then execute the Web apps, including the Asp.Net ones. These are the processes responding to HTTP requests.

If a worker process crashes, WAS would immediately try to create a new process for the Application Pool, because the Web app needs to continue serving requests. But if these processes are repeatedly crashing, shortly after being created, WAS is going to "say":

I keep creating processes for this app, and they crash. It is expensive for the system to create these processes, which crash anyway. So why don't I stop doing that, marking the Application Pool accordingly (Stopped), until the administrator fixes the cause? Once the condition causing the crashes is removed, the administrator may manually re-Start the application pool.

WAS reports what it does in Windows Events, in the System log. So, if we open the Event Viewer, go to the System log and filter by Event Sources=WAS, we may see a pattern like this:

There is a pattern to look for: usually 5 Warning events 5011, for the same application pool name, within a short time frame. If the PIDs (Process IDs) in the Warning events are changing, it is a sign that the previous w3wp.exe instance was "killed"; it ended for some reason:

A process serving application pool 'BuggyBits.local' suffered a fatal communication error with the Windows Process Activation Service. The process id was '6992'. The data field contains the error number.

... and then followed by an Error event 5002 for that same application pool name:

Application pool 'BuggyBits.local' is being automatically disabled due to a series of failures in the process(es) serving that application pool.
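When there are many WAS events to read through, the same pattern can be spotted with a small script. The sketch below assumes the events were already exported as (event ID, message) pairs; the function name and the shape of the result are hypothetical, and the regular expressions only match the English wording of events 5011 and 5002.

```python
import re

# Patterns follow the English wording of WAS events 5011 and 5002.
CRASH_5011 = re.compile(
    r"A process serving application pool '(?P<pool>[^']+)' suffered a fatal "
    r"communication error.*?The process id was '(?P<pid>\d+)'",
    re.DOTALL,
)
DISABLED_5002 = re.compile(
    r"Application pool '(?P<pool>[^']+)' is being automatically disabled"
)


def summarize_was_events(events):
    """Summarize (event_id, message) pairs taken from the System log, Source=WAS.

    Returns {pool_name: {"pids": [crashed PIDs in order], "disabled": bool}}.
    """
    summary = {}
    for event_id, message in events:
        if event_id == 5011:
            match = CRASH_5011.search(message)
            if match:
                entry = summary.setdefault(
                    match["pool"], {"pids": [], "disabled": False}
                )
                entry["pids"].append(int(match["pid"]))
        elif event_id == 5002:
            match = DISABLED_5002.search(message)
            if match:
                entry = summary.setdefault(
                    match["pool"], {"pids": [], "disabled": False}
                )
                entry["disabled"] = True
    return summary
```

A pool whose entry lists several different PIDs and has "disabled" set to True matches the Rapid-Fail Protection pattern described here.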

This behavior – turning off the application pool – is also seen when WAS can't start the worker process at all. For instance, when the application pool identity has a wrong or non-decipherable password, WAS repeatedly fails to start the w3wp.exe with the custom account that was set up for the application pool. In Windows Events we would see Warnings/Errors from WAS like:

Event ID 5021 when IIS/WAS starts: The identity of application pool BuggyBits.local is invalid. The user name or password that is specified for the identity may be incorrect, or the user may not have batch logon rights. If the identity is not corrected, the application pool will be disabled when the application pool receives its first request. If batch logon rights are causing the problem, the identity in the IIS configuration store must be changed after rights have been granted before Windows Process Activation Service (WAS) can retry the logon. If the identity remains invalid after the first request for the application pool is processed, the application pool will be disabled. The data field contains the error number.

Event ID 5057 when the app is first accessed: Application pool BuggyBits.local has been disabled. Windows Process Activation Service (WAS) did not create a worker process to serve the application pool because the application pool identity is invalid.

Event ID 5059, service becomes unavailable: Application pool BuggyBits.local has been disabled. Windows Process Activation Service (WAS) encountered a failure when it started a worker process to serve the application pool.

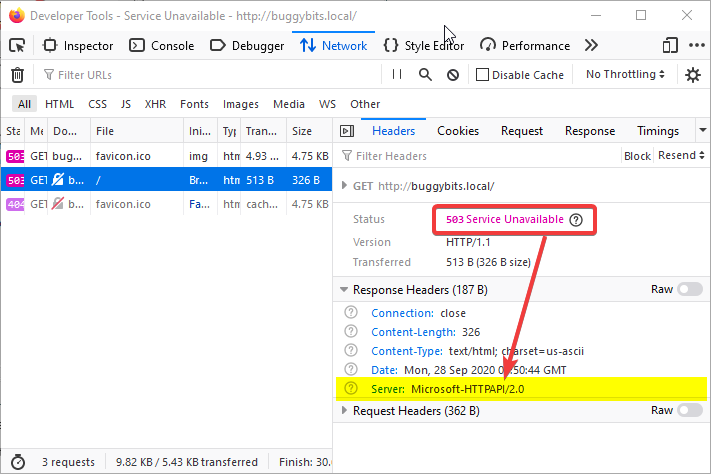

When the application pool is turned off, and hence we don't have a w3wp.exe to process requests for it, the HTTP.SYS driver responds with status 503 instead of IIS. Remember that IIS is just a user-mode service making use of the kernel-level HTTP.SYS driver?

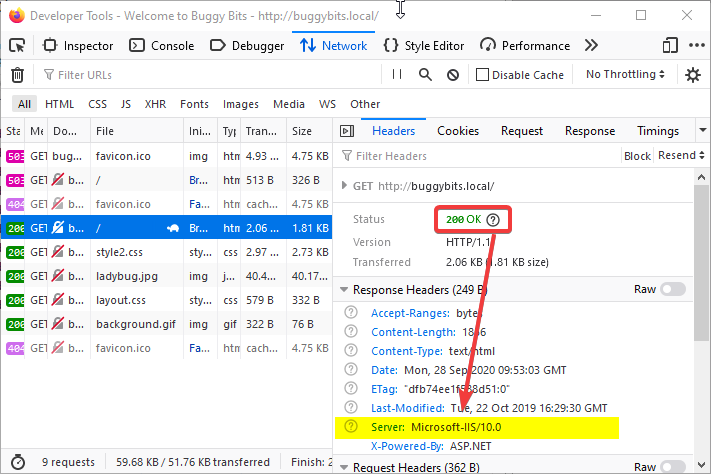

With a normal, successful request, we have the IIS responding:

But when the application pool is down, the request does not even reach IIS. The response comes from HTTP.SYS, not from the w3wp.exe:

Without a w3wp.exe to process the requests which arrived for an application pool, HTTP.SYS, while acting as a proxy, basically says:

Look, dear client, I tried to relay your request to IIS, to its w3wp.exe process created to execute the called app.

But the app or its configuration is repeatedly crashing the worker process, so creation of new processes ceased.

Hence, I had no process where to relay your request; there is no service ready to process your request.

Sorry: 503, Service Unavailable.
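One practical way to tell who answered is the Server response header. The sketch below relies on an assumption not stated in this article: with default settings, HTTP.SYS identifies itself with a Server value starting with "Microsoft-HTTPAPI", while IIS uses one starting with "Microsoft-IIS". Servers can be configured to change or remove this header, so treat the result as a hint only. The function name is hypothetical.

```python
def who_answered(status_code, headers):
    """Guess which component produced an HTTP response, from its Server header."""
    # Header names are case-insensitive, so normalize before the lookup.
    server = {k.lower(): v for k, v in headers.items()}.get("server", "")
    if server.startswith("Microsoft-HTTPAPI"):
        component = "HTTP.SYS (the request never reached a worker process)"
    elif server.startswith("Microsoft-IIS"):
        component = "IIS worker process (w3wp.exe)"
    else:
        component = "unknown"
    return f"{status_code} answered by {component}"


if __name__ == "__main__":
    print(who_answered(503, {"Server": "Microsoft-HTTPAPI/2.0"}))
    print(who_answered(200, {"Server": "Microsoft-IIS/10.0"}))
```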

I think of an IIS application pool as:

- A queue for requests, in the kernel, maintained by the HTTP.SYS driver; see it in a command-line console with

netsh http show servicestate

- Settings on how to create a w3wp.exe IIS worker process and how it should behave.

- This process will load and execute our web application, most commonly an Asp.Net/.NET Framework application.

As with all applications, exceptions happen, unforeseen errors. They all start as first-chance exceptions.

- Most of the first-chance exceptions are handled and they never hurt the app or its executing process.

They are handled either by the code of the application, or by the Asp.Net framework itself.

- If the unhandled exception happens in the context of executing an HTTP request, the Asp.Net Framework will do its best to treat (handle) it by wrapping it and generating an error page, usually with an HTTP response status code of 500, Server-side execution error.

The exception that was not handled by the developer's code gets handled by the underlying framework.

- Some exceptions are unhandled, not treated by any code in the process, so they become the so-called second-chance / process-crashing exceptions.

- When these happen, the operating system simply terminates the process that generated them; all the virtual memory of the process is flushed away into oblivion.

- Exceptions occurring outside the context of request processing (such as during application startup) have more chances to become second-chance, crashing exceptions.
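The layers described in the list above can be illustrated with a loose Python analogy. This is not Asp.Net code: run_request is a hypothetical stand-in for the framework's per-request safety net, and the two handlers are made-up examples.

```python
def run_request(handler):
    """Mimic a framework's per-request safety net (an analogy, not Asp.Net code)."""
    try:
        return 200, handler()
    except Exception as exc:  # not handled by the app: the framework steps in
        return 500, f"Server error: {type(exc).__name__}"


def careful_handler():
    try:
        return str(1 // 0)
    except ZeroDivisionError:  # exception handled by the application code
        return "fallback value"


def careless_handler():
    return str(1 // 0)  # nothing in the application handles this


if __name__ == "__main__":
    print(run_request(careful_handler))   # handled by the app itself
    print(run_request(careless_handler))  # handled by the "framework" as a 500
```

An exception raised outside run_request, for example in startup code, has no safety net around it; that is the situation in which an exception can end up terminating the whole process.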

Fortunately, these exceptions – first-chance or second-chance – may leave traces. The first place to look at is in the Windows Events, Application log.

As an illustration, the code of my application running in the BuggyBits.local application pool generated the following:

Since the exception could not be handled by Asp.Net, it immediately became a second-chance exception, causing Windows to terminate the w3wp.exe in the same second:

If you notice the time-stamp in the capture above, it corresponds to a Warning event from WAS in the System log, telling that the worker process did not respond (image 2).

This response does not refer to the HTTP request/response; it refers to pings from WAS.

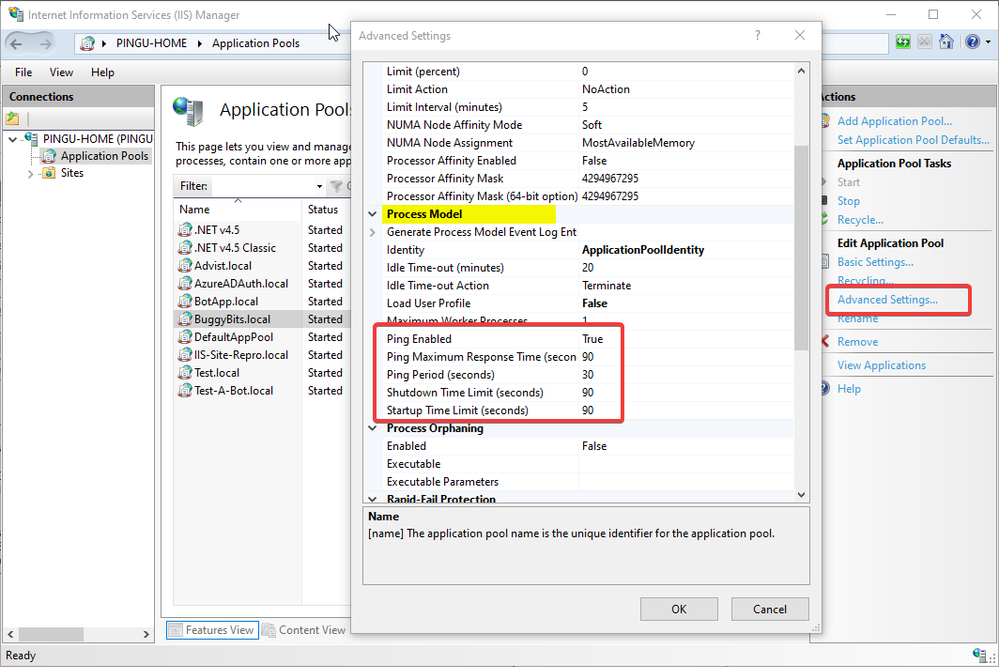

Remember that I said WAS is creating the worker processes, w3wp.exe, but it also monitors them. It has to know if the worker process is healthy, if it can still serve requests. Of course, WAS is not able to know everything about the health of a w3wp.exe; many things could go wrong with the code of our app. But at least it can send pings to that process.

Illustrating with the Advanced Settings of an application pool: WAS sends pings to its instances of w3wp.exe every 30 seconds. For each ping, the w3wp.exe (that PID) has 90 seconds to reply. If no ping response is received, WAS concludes that the process is dead: a crash or hang happened, and no process thread is available to respond to the ping. Notice that there are other time limits that WAS watches too.
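The timing can be worked through with a small Python sketch. It is a simplified model under stated assumptions: the first ping goes out at second 0, pings follow a fixed 30-second schedule, and a reply that takes longer than 90 seconds counts as missing. The function and parameter names are hypothetical.

```python
def time_declared_dead(reply_delays, ping_period=30, ping_max_response_time=90):
    """Return the second at which the worker is considered dead, or None.

    reply_delays[i] is how long the worker took to answer ping i, in seconds;
    None means the ping was never answered.
    """
    for index, delay in enumerate(reply_delays):
        if delay is None or delay > ping_max_response_time:
            sent_at = index * ping_period  # assumed fixed ping schedule
            return sent_at + ping_max_response_time
    return None


if __name__ == "__main__":
    # Two pings answered quickly, the third one never answered.
    print(time_declared_dead([1, 2, None]))
```

With the third ping sent at second 60 and never answered, this model has the process declared dead at second 150, which shows that a hang may go unnoticed for up to two minutes.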

It may happen that, even looking in Windows Events > Application log, we still don't know why our w3wp.exe is crashing or misbehaving. I've seen cases where the exceptions are not logged in Windows Events. In such cases, look in the custom logging solution of the web application, if it has one.

Lastly, if we don't have a clue about these exceptions, if we have no traces left, we could attach a debugger to our w3wp.exe and see what kind of exceptions happen in there. Of course, we would need to reproduce the steps triggering the bad behavior, when using the debugger.

We can even tell that debugger to collect some more context around the exceptions, not only the exceptions themselves. We could collect the call stacks or memory dumps for such context.

One such debugging tool is the command-line ProcDump. It does not require an installation; you only need to run it from an administrative console.

Let's say I put my ProcDump in E:\Dumps\. Before using it, I must determine the PID (Process ID) of my faulting worker process w3wp.exe:

E:\Dumps>C:\Windows\System32\InetSrv\appcmd.exe list wp

Then, I'm attaching ProcDump to the PID, redirecting its output to a file that I can later inspect:

E:\Dumps>procdump.exe -e 1 -f "" [PID-of-w3wp.exe] > ProcDump-monitoring-log.txt
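If these two steps need to be repeated often, they could be scripted. The Python sketch below only prepares the data: it extracts PIDs from the text printed by appcmd and builds the ProcDump argument list with the same switches as the command above. The assumed output shape of "appcmd.exe list wp" (lines like WP "6992" (applicationPool:BuggyBits.local)) and both function names are illustrative assumptions.

```python
import re

# Assumed shape of one line printed by "appcmd.exe list wp".
WP_LINE = re.compile(r'WP "(?P<pid>\d+)" \(applicationPool:(?P<pool>[^)]+)\)')


def pids_for_pool(appcmd_output, pool_name):
    """Return the PIDs of the worker processes serving pool_name."""
    return [
        int(m["pid"])
        for m in WP_LINE.finditer(appcmd_output)
        if m["pool"] == pool_name
    ]


def procdump_command(pid):
    """Build the argument list for the ProcDump monitoring command shown above."""
    # The empty string stands for the "" that follows the -f switch.
    return ["procdump.exe", "-e", "1", "-f", "", str(pid)]


if __name__ == "__main__":
    sample = 'WP "6992" (applicationPool:BuggyBits.local)\n'
    for pid in pids_for_pool(sample, "BuggyBits.local"):
        print(procdump_command(pid))
```

On the server itself, the resulting list could be handed to subprocess.run with stdout pointed at the log file, from an administrative console.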

If my Windows has a UI and I'm allowed to install diagnostic apps, then I prefer Debug Diagnostics. Its UI makes it easier to configure data collection, and it determines the PID of w3wp.exe itself, based on the app pool selected. Debug Diag is better suited to troubleshoot IIS applications.

Both Debug Diag and ProcDump are free tools from Microsoft, widely used by professionals in troubleshooting. A lot of content is available on the Web on how to use these.

A natural continuation to this article is the one I wrote about exceptions, exception handling, and how to collect memory dumps to capture exceptions or performance issues:

- About exceptions and capturing them with dumps

Aside: Just in case you are wondering what I use to capture screenshots for illustrating my articles, check out this little ShareX application in Windows Store.

Source: https://techcommunity.microsoft.com/t5/iis-support-blog/http-response-503-service-unavailable-from-iis-one-common/ba-p/1720007
